On Zero Trust Programming

Applying the Concept of Zero Trust Security to the Code We Write

Open source software is vital in modern software engineering, and yet we as developers are faced with the increasingly difficult task of tracking the security of our dependencies -- both direct and indirect -- all while maintaining velocity.

"Zero Trust" is a security model based on the idea "never trust, always verify." It mandates continuous authentication and validation for every user and device, irrespective of their position inside or outside the network. This is in contrast to traditional IT network security, based on a castle-and-moat model, where no one outside the network is given access but everyone inside the network is trusted and given access by default.

By applying the ideas of Zero Trust to the code we write -- what I'm calling Zero Trust Programming -- we can simplify the process of auditing 3rd party code, plug existing vulnerabilities caused by ostrich security (i.e. head-in-the-sand) and GitHub-star-based audits, and drastically cut down on application attack surfaces.

The Problem at Hand

A Castle and Moat -- Generated by Midjourney

The benefits of using open source software are clear. But with that comes a cost -- the cost of babysitting the security of your dependencies and transitive dependencies.

Imagine you're building an API using Node.js with an endpoint that greets your users with a friendly message. You were going to write the code to generate nice, personalized greetings until you found a package on npm, "justgreetings", that does exactly that. So you install it and run it locally to see how well it works. You make a request and get your personalized greeting!

Unfortunately, in the meantime, that function that you thought was just generating greetings was also: reading in your environment variables (including your OPENAI_API_KEY) and your AWS credential file, sending an HTTP request with that data out to the internet, and then installing a crypto miner in the background.

You could sandbox your application inside a Docker container or use Deno's permission system. Both of these allow you to limit the blast radius by making that castle-and-moat smaller, but as soon as one part of your application needs access to a file (~/.aws/credentials) or an environment variable (OPENAI_API_KEY) everything has access. Why can't we be more granular with our permissions?

According to the Snyk 2022 Open Source Security Report, the average application development project has 49 vulnerabilities and 80 direct dependencies (source). How much time would it take to audit those direct and indirect dependencies and how often would you need to do that? And how much time is left over?

According to the Linux Foundation, between 70 and 90% of any given modern software solution is made up of open source software (source). So, for every line of code you write, there are roughly 2 to 9 lines of open source dependency code to be vetted.

This may be possible for a large company that can dedicate a team to vetting 3rd party code, but I don't think it's reasonable. And where does that leave the rest of us, from the smaller organization to the fast-moving startup to the indie dev? Over 40% of organizations don't have high confidence in their open source software security (according to the same Snyk report). What if there was a better approach to help bridge that gap?

Enter Zero Trust Programming

A Baby Robot Trapped in a Sandbox -- Generated by Midjourney

Zero Trust Programming (ZTP for short) takes the idea of "Zero Trust" as it relates to network security and applies it to the code you write. This allows you to define granular permissions that can be applied to functions, types, imports, and other scopes as needed. Today's languages and runtimes follow the same castle-and-moat paradigm, where once 3rd party code gets imported it has the same trusted access as 1st party code.

Returning to the example of the justgreetings npm package, but this time with ZTP, the application could import the 3rd party code without granting it any special permissions and run the code knowing it wouldn't be able to access any special resources. Even though other places in your application may require file system access, network access, or environment variable access, those same permissions aren't automatically granted everywhere within the castle walls of your codebase.
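To make that concrete, here is a rough sketch of what deny-by-default could feel like. Everything in it is hypothetical: `Sandbox`, `Permission`, `PermissionDeniedError`, and the `justGreetings` stand-in are names invented for this illustration, and the environment is a plain object rather than the real process environment. A real ZTP runtime would enforce the boundary itself instead of relying on a handle being passed around.

```typescript
// Hypothetical sketch: none of these names belong to an existing runtime API.
type Permission = "env:read" | "fs:read" | "net:connect";

class PermissionDeniedError extends Error {
  constructor(caller: string, permission: Permission) {
    super(`${caller} was not granted ${permission}`);
    this.name = "PermissionDeniedError";
  }
}

// A scoped view of host resources. The environment is a plain object here so
// the example stays self-contained.
class Sandbox {
  private readonly caller: string;
  private readonly granted: ReadonlySet<Permission>;
  private readonly env: Readonly<Record<string, string>>;

  constructor(caller: string, granted: Permission[], env: Record<string, string>) {
    this.caller = caller;
    this.granted = new Set(granted);
    this.env = env;
  }

  readEnv(name: string): string | undefined {
    if (!this.granted.has("env:read")) {
      throw new PermissionDeniedError(this.caller, "env:read");
    }
    return this.env[name];
  }
}

// Stand-in for the third-party package: it greets, but also tries to snoop.
function justGreetings(name: string, host: Sandbox): string {
  try {
    host.readEnv("OPENAI_API_KEY"); // throws unless env:read was granted
  } catch {
    // a malicious package would likely swallow the denial and carry on
  }
  return `Hello, ${name}!`;
}

const fakeEnv = { OPENAI_API_KEY: "sk-example" };
const untrusted = new Sandbox("justgreetings", [], fakeEnv);
console.log(justGreetings("Ada", untrusted));
```

The greeting still works, but the attempt to read the key fails because the package was never granted `env:read`, even though other parts of the application might hold that permission.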

The idea of ZTP doesn't need to stop with 3rd party code, with system resources, or at the package level. You could also define resources that expose their own permissions within your application code. For example, say you have a function for loading database credentials. You could define an associated permission being exposed (e.g. "db_secret_read") which could then be granted elsewhere in the codebase (e.g. grant access to the database client or the list_students function).
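As a minimal sketch of the database credentials example, assuming a simple in-memory registry: `grant`, `requirePermission`, and `loadDatabaseCredentials` are hypothetical names, and the caller's scope is passed in as a string only to keep the example small. In a real ZTP runtime the caller's identity would have to come from the runtime itself, since a string argument is trivially forgeable.

```typescript
// Hypothetical registry of which scope has been granted which permission.
const grants = new Map<string, Set<string>>();

function grant(scope: string, permission: string): void {
  const existing = grants.get(scope) ?? new Set<string>();
  existing.add(permission);
  grants.set(scope, existing);
}

function requirePermission(scope: string, permission: string): void {
  if (!grants.get(scope)?.has(permission)) {
    throw new Error(`scope "${scope}" was not granted "${permission}"`);
  }
}

// The resource declares the permission it exposes ("db_secret_read").
// The returned values are placeholders, not real credentials.
function loadDatabaseCredentials(callerScope: string): { user: string; password: string } {
  requirePermission(callerScope, "db_secret_read");
  return { user: "app", password: "example-only" };
}

// Grant the permission to the database client and nowhere else.
grant("database_client", "db_secret_read");
```

With this in place, `loadDatabaseCredentials("database_client")` succeeds, while the same call from any scope that was never granted `db_secret_read` throws.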

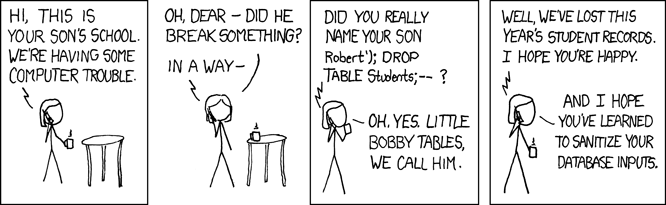

xkcd comic "Exploits of a Mom"

Adding permissions around 1st party code and resources can help to mitigate another class of vulnerability, code injection (as well as possible non-malicious, buggy code). For example, the function "list_students" would only have "read" permissions on the database and would be prevented from making any changes even if someone tries searching for the student "Robert'; DROP TABLE Students; --".
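Here is a toy illustration of that idea. It assumes a hypothetical `FakeConnection` that only records the statements it would have run, classifies anything that is not a `SELECT` as a write, and splits statements naively on semicolons. None of this is how a real database driver works; the query is deliberately built by string concatenation so the injection gets through to the permission check.

```typescript
type DbPermission = "read" | "write";

// Toy stand-in for a database connection; it records what it would have run.
class FakeConnection {
  readonly executed: string[] = [];
  private readonly permissions: ReadonlySet<DbPermission>;

  constructor(permissions: DbPermission[]) {
    this.permissions = new Set(permissions);
  }

  run(sql: string): void {
    // Naive statement splitting, good enough for the illustration only.
    const statements = sql
      .split(";")
      .map((s) => s.trim())
      .filter((s) => s.length > 0 && !s.startsWith("--"));
    for (const statement of statements) {
      const needed: DbPermission = /^select\b/i.test(statement) ? "read" : "write";
      if (!this.permissions.has(needed)) {
        throw new Error(`statement requires "${needed}" permission: ${statement}`);
      }
      this.executed.push(statement);
    }
  }
}

// Deliberately vulnerable: the name is concatenated straight into the SQL.
function listStudents(conn: FakeConnection, name: string): void {
  conn.run(`SELECT * FROM Students WHERE name = '${name}'`);
}

const readOnly = new FakeConnection(["read"]);
listStudents(readOnly, "Alice"); // allowed: a plain SELECT
try {
  listStudents(readOnly, "Robert'; DROP TABLE Students; --");
} catch (error) {
  console.log((error as Error).message); // the DROP is rejected
}
```

The vulnerable query still gets built, but the connection held by `listStudents` only carries "read", so the injected `DROP TABLE` never runs. The permission boundary doesn't fix the bug; it limits what the bug can do.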

Finally, in addition to blocking unauthorized access to secured resources within the application, ZTP would be able to generate an audit log that details what permission boundaries are crossed, when, and by whom.
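A sketch of what such an audit trail might record, under the assumption that every permission check goes through a single function. `AuditEntry`, `auditLog`, and `checkAndRecord` are hypothetical names; a real implementation would presumably write to durable storage rather than an in-memory array.

```typescript
interface AuditEntry {
  at: string; // ISO 8601 timestamp of the check
  caller: string; // who crossed (or tried to cross) the boundary
  permission: string; // which boundary
  allowed: boolean; // the decision
}

const auditLog: AuditEntry[] = [];

// Decide and record in one step so no check can skip the log.
function checkAndRecord(
  caller: string,
  permission: string,
  granted: ReadonlySet<string>,
): boolean {
  const allowed = granted.has(permission);
  auditLog.push({ at: new Date().toISOString(), caller, permission, allowed });
  return allowed;
}

const dbClientGrants = new Set(["db_secret_read"]);
checkAndRecord("database_client", "db_secret_read", dbClientGrants);
checkAndRecord("justgreetings", "env:read", new Set<string>());
```

After these two calls the log holds one allowed entry and one denied entry, each with the caller, the permission, and a timestamp.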

Challenges and Tradeoffs

A Robot Art Thief -- Generated by Midjourney

Of course, in the ideal case ZTP would be available in your current language or framework, intuitive, and frictionless, but that's easier said than done. Tools like Open Policy Agent and AWS IAM have proven that granular, configurable permission systems can easily become overwhelming. If ZTP is too complex, too hard to understand, or too hard to use, developers either won't use it or will just use a blanket Allow:*. That's a place where TypeScript sets a great example: allowing for gradual and selective use of ZTP removes the all-or-nothing fatigue. Start by limiting the permissions on some imported 3rd party packages and build on that foundation.

Another concern is the occasional need to break the rules. ZTP needs to be flexible enough to allow developers to use the tool as they see fit. One possible way to handle this might take inspiration from Rust's unsafe blocks. There could be the ability to add a block or scope with elevated permissions, allowing for deliberate rule-bending that a static analyzer or an auditor could call out.
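One way such an escape hatch could look, loosely modeled on the idea of an unsafe block. This is a sketch under assumptions: `withElevated`, `requireActive`, and the `elevations` record are hypothetical, and it only handles synchronous code. Asynchronous code would need a different way to track the active scope (in Node, something like `AsyncLocalStorage` might serve).

```typescript
// Permissions currently in effect, plus a record of every deliberate elevation.
const active = new Set<string>();
const elevations: { permission: string; reason: string }[] = [];

// Run `body` with one extra permission, then restore the previous state.
// The mandatory `reason` gives auditors and tooling something to flag.
function withElevated<T>(permission: string, reason: string, body: () => T): T {
  const alreadyHeld = active.has(permission);
  active.add(permission);
  elevations.push({ permission, reason });
  try {
    return body();
  } finally {
    if (!alreadyHeld) active.delete(permission);
  }
}

function requireActive(permission: string): void {
  if (!active.has(permission)) {
    throw new Error(`missing permission: ${permission}`);
  }
}

const result = withElevated("fs:write", "one-off cache migration", () => {
  requireActive("fs:write"); // passes only inside the elevated scope
  return "migrated";
});
console.log(result, elevations.length);
```

Outside the callback, `requireActive("fs:write")` throws again, and the `elevations` list is exactly the kind of thing a linter or reviewer could surface.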

What would the performance impact be for a permission system this extensive? Potentially severe, but ultimately the answer would come down to implementation. Ideally a fair amount of the cost could be avoided by enforcing rules at build-time or compile-time. And, as cooperation with the runtime would likely be essential, if these rules could be built-in that could also help. At the end of the day, though, there would be at least some amount of slow-down which would have to be weighed against the security benefits. The front door of my house slows me down but I'm okay with that.

One area that I see as the most challenging would be subprocesses and the FFI (foreign function interface). I can't think of a good workaround for vulnerabilities that stem from these types of code. One option, which Deno implements, is the ability to limit which commands can be run, but obviously extending the same ZTP security would be difficult if not impossible outside of the application runtime itself. Another approach -- while not a true fix -- would be to strongly encourage those external functions and libraries to be made available as WASM, which could allow for the same performance and versatility of other languages and tools while providing the same level of granular control to the application runtime.

Implementing Zero Trust Programming

A Robot Passing Through a Metal Detector -- Generated by Midjourney

Since Zero Trust Programming is purely conceptual, it isn't necessarily tied to one language or framework. It could be implemented in an existing language like Python or TypeScript or it could be part of a new language (ztp-lang?).

While a whole new language might allow for a cleaner design, it would make adoption difficult. Everything listed in the previous section about gradual adoption would go out the window. Instead, I think the JavaScript/TypeScript ecosystem could be a promising starting point. ZTP could have its own JS runtime -- like Node, Bun, or Deno -- that implements these zero trust security features.

I don't know what would work best in terms of syntax; maybe it could transpile to JavaScript/TypeScript, like TSX, or use a special decorator syntax. Or it could use configuration comments, like eslint.

It would also be great if ZTP could be "backwards compatible" and permissions could be added after-the-fact, similar to how the TypeScript ecosystem has "@types/" packages which provide type information for JavaScript packages.
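As a sketch of what an after-the-fact permission manifest might contain, here is one possible shape. The `PackagePermissions` type, the `manifest` map, and the "some-aws-client" package are all hypothetical; the point is only that the permissions live outside the package, the way "@types/" declarations live outside the JavaScript they describe, and that an unlisted package gets nothing.

```typescript
// Hypothetical shape for permissions declared on behalf of a package.
type PackagePermissions = {
  env?: string[]; // environment variables the package may read
  fs?: string[]; // file paths the package may read
  net?: string[]; // hosts the package may contact
};

// Maintained separately from the packages themselves.
const manifest = new Map<string, PackagePermissions>([
  ["justgreetings", {}], // needs nothing beyond plain computation
  [
    "some-aws-client", // made-up package name for illustration
    { env: ["AWS_REGION"], fs: ["~/.aws/credentials"], net: ["*.amazonaws.com"] },
  ],
]);

// Packages without an entry default to no permissions at all.
function permissionsFor(pkg: string): PackagePermissions {
  return manifest.get(pkg) ?? {};
}

function mayReadEnv(pkg: string, name: string): boolean {
  return (permissionsFor(pkg).env ?? []).includes(name);
}

console.log(mayReadEnv("justgreetings", "OPENAI_API_KEY"));
```

Defaulting to an empty entry keeps the deny-by-default behavior while still letting a project adopt ZTP one dependency at a time.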

Specific syntax aside, the key to making ZTP accessible would be developer tooling -- static analyzers, IDE integrations, debugger support, visualizations of permission trees (e.g. DAG visualizations or flame graphs) -- and there would be a lot of room for integration with existing security tools and frameworks like Snyk and OpenTelemetry (e.g. audit logs).

At the end of the day, though, the community would be most important when it comes to planning, implementing, integrating, testing, and documenting.

Conclusion

Zero Trust Programming would allow for granular control of the ways in which application components and 3rd party code access sensitive resources, both within the application itself and within the broader context of the host environment. Through ZTP, developers would have the tools required to add whatever level of security fits best with their use case. This is all still conceptual and far from having a practical implementation, but I think the idea is at least interesting, if not promising.

Thank you for reading! I would love to hear your thoughts on the idea of Zero Trust Programming. What do you like? What would you change? Do you think it's practical and could be implemented? What's missing? Let me know! You can reach me on LinkedIn, Twitter, Mastodon, or BlueSky!